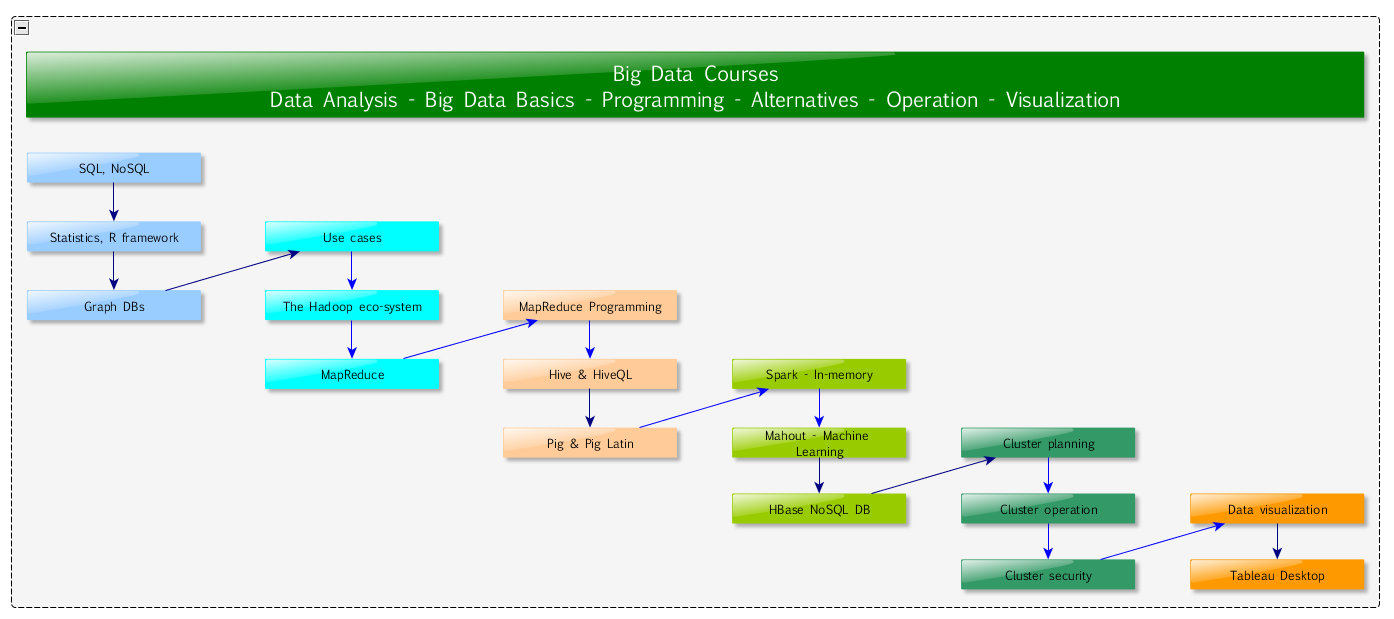

The diagram above details the structure of a Big Data course.

- Fundamentals of databases (SQL and NoSQL), statistics (using R) and graph databases

- The focus is on the Hadoop ecosystem and its programming paradigm, MapReduce

- MapReduce jobs can also be expressed in easier-to-master high-level languages, such as those of Pig (Pig Latin) and Hive (HiveQL)

- While Hadoop is designed for batch processing, the ecosystem also covers other use cases:

  - Real-time data access: HBase, a NoSQL database

  - Fast, in-memory processing of lower data volumes: Spark

  - Machine learning on top of Hadoop: Mahout

- To use these tools, a well-built, secure cluster has to be planned and deployed, and then operated securely

- After the data analysis, the course details the final visualization steps, which let the analytic results make an impact
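The MapReduce paradigm at the core of the course is usually introduced through the classic word-count example. The sketch below is a minimal, single-process Python imitation of the map, shuffle and reduce phases, meant only to show the data flow. It does not use Hadoop's API (real jobs are typically written in Java or run through Hadoop Streaming), and the function names `map_phase`, `shuffle_phase`, `reduce_phase` and `word_count` are hypothetical.

```python
from collections import defaultdict
from itertools import chain


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Shuffle: group emitted values by key, as the framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine all values collected for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases in sequence; keys are sorted, as Hadoop sorts them before reducing."""
    mapped = chain.from_iterable(map_phase(line) for line in lines)
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in sorted(grouped.items()))


if __name__ == "__main__":
    docs = ["Hadoop runs MapReduce", "Spark and Hadoop"]
    print(word_count(docs))
    # prints {'and': 1, 'hadoop': 2, 'mapreduce': 1, 'runs': 1, 'spark': 1}
```

In a real cluster, the map and reduce functions run in parallel on many nodes, and the shuffle moves data across the network; here everything happens in one process, so the sketch says nothing about performance.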

A course derived from this one is taught at the University of Szeged.